Road incidents that involve even the mere possibility of a self-driving vehicle hitting a pedestrian scare away consumers. Recent tragic events, such as the death of a woman struck by a self-driving Uber test car, have motivated OEMs to look for new ways to ensure safety.

According to a report by Deloitte, consumers care more about their safety than about all the features autonomous cars have to offer. It looks like now is the right time to stop and rethink the very concept of self-driving cars, focusing on how to make them safer before they even hit the road.

Intellias has written an overview of the most promising technologies that can improve automotive security and make autonomous driving safer for everyone, both in practice and in the eyes of consumers.

Virtual simulation saves lives and improves automotive safety

According to annual global road crash statistics, on average 3,287 people die per day in road crashes. Sadly, autonomous vehicles tested on the roads have contributed to these statistics. The good news, though, is that there’s another way to test self-driving vehicles without endangering people in or around them.

Virtual simulation offers a solution: it lets researchers run vehicles through many different scenarios indoors. Virtual test drives show how an autonomous vehicle behaves under various conditions and in different situations, including collisions, which are hard to test in the real world.
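To make the idea of running a vehicle "through different scenarios" concrete, here is a deliberately simple sketch of a scenario sweep. It assumes a toy point-mass braking model (constant speed during an assumed 0.5 s reaction time, then constant deceleration of friction × g) and illustrative friction values for dry, wet, and icy roads. The function name, scenario grid, and parameters are hypothetical; this is not how Waymo or Nvidia simulators work, which model sensors, perception, and traffic in far more detail.

```python
import itertools
import math

G = 9.81  # gravitational acceleration, m/s^2


def impact_speed_kmh(speed_kmh: float, friction: float, obstacle_m: float,
                     reaction_s: float = 0.5) -> float:
    """Speed (km/h) at which the car reaches the obstacle; 0.0 means it stops in time.

    Toy model (an assumption): constant speed during the reaction time,
    then constant deceleration of friction * G until the car stops.
    """
    v = speed_kmh / 3.6                      # convert km/h to m/s
    remaining = obstacle_m - v * reaction_s  # distance left when braking starts
    if remaining <= 0:
        return speed_kmh                     # reaches the obstacle before braking starts
    decel = friction * G
    v_sq_at_obstacle = v * v - 2 * decel * remaining
    return math.sqrt(v_sq_at_obstacle) * 3.6 if v_sq_at_obstacle > 0 else 0.0


# Hypothetical scenario grid: road surface friction x speed x pedestrian distance.
SURFACES = {"dry": 0.8, "wet": 0.5, "icy": 0.1}
SPEEDS_KMH = [30, 50, 70]
DISTANCES_M = [15, 30]

for (surface, mu), speed, dist in itertools.product(SURFACES.items(), SPEEDS_KMH, DISTANCES_M):
    hit = impact_speed_kmh(speed, mu, dist)
    status = f"COLLISION at {hit:.0f} km/h" if hit > 0 else "stops in time"
    print(f"{surface:>3} {speed:>3} km/h, pedestrian at {dist:>2} m: {status}")
```

Even this crude grid shows why simulation is attractive: collision cases such as an icy road at 70 km/h can be explored exhaustively without putting anyone at risk.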

Waymo, for example, actively uses computer simulation to test its autonomous vehicles. The company builds full virtual models of cities, tests its self-driving software in them, and collects data from virtual rides every day. It then feeds what it learns into the software of its 600 minivans being tested in the real world, making the vehicles safer on public roads.

Nvidia is another big adopter of virtual simulation. In fact, in response to the Uber accident, Nvidia suspended testing of its autonomous cars on public roads. At its GPU Technology Conference, the company presented its own simulator for autonomous car testing.

The Nvidia Drive Constellation platform is a cloud-based system for photorealistic simulation that tests autonomous car software in virtual environments. It lets self-driving vehicles cover millions of miles in data centers instead of on public roads.

Safety is the most important feature of a self-driving car. It’s imperative that a car operate safely, even when things go wrong.

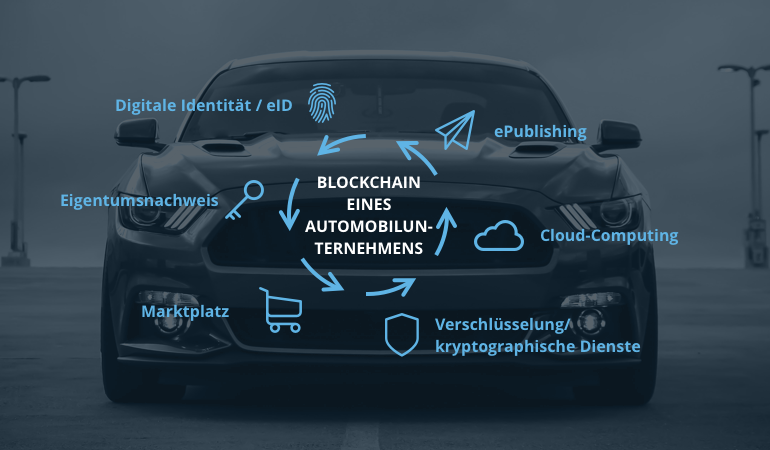

Blockchain prevents hacking attacks and solves the issue of trust and security in vehicles

Although blockchain is best known as the technology behind cryptocurrencies, it is also well suited to the auto industry. In fact, it’s currently one of the hottest automotive trends. Take Toyota and Porsche, for example: both companies are already working with blockchain. The technology offers many benefits for autonomous cars.

Blockchain can also make self-driving cars safer. A hacking attack in which an intruder takes control of the vehicle is every driver’s nightmare: no car owner wants to hit a wall because of bad cybersecurity. This is where blockchain technology can help, with its decentralized system of verification by consensus.

Because autonomous cars depend on software as much as on hardware, they are vulnerable to cyberattacks. To date, there have been no known hostile hacking attacks on autonomous vehicles. But that doesn’t mean there won’t be any in the future, once driverless technology hits the mass market. In fact, researchers from the University of South Carolina have demonstrated that it’s possible to trick Tesla’s sensors into not seeing objects around them.

Blockchain is one way to deal with the hacking threat. Compared to a traditional database, a decentralized blockchain is more secure. It protects the big data on which the software for self-driving cars relies, helping to verify that data and store it securely. Moreover, records in a distributed blockchain are effectively immutable: each block is cryptographically linked to the one before it, so tampering with stored data is both extremely difficult and easy to detect.
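The tamper-evidence part of that claim comes from hash chaining. Below is a minimal sketch, assuming hypothetical vehicle records as block payloads, of how linking each block to the previous block's SHA-256 hash makes any later edit detectable. It deliberately omits what makes a real blockchain hard to rewrite (distribution across many nodes and consensus): on its own, an attacker could recompute every hash, so this shows detection, not prevention.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block


@dataclass
class Block:
    index: int
    data: dict            # hypothetical payload, e.g. a sensor or map update
    prev_hash: str        # hash of the previous block; links the chain together
    block_hash: str = ""

    def compute_hash(self) -> str:
        # Hash a canonical JSON encoding of the contents (excluding block_hash itself).
        payload = json.dumps(
            {"index": self.index, "data": self.data, "prev_hash": self.prev_hash},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain: List[Block], data: dict) -> Block:
    """Append a block whose prev_hash points at the current tip of the chain."""
    prev_hash = chain[-1].block_hash if chain else GENESIS_PREV
    block = Block(index=len(chain), data=data, prev_hash=prev_hash)
    block.block_hash = block.compute_hash()
    chain.append(block)
    return block


def verify_chain(chain: List[Block]) -> bool:
    """Return True only if every block's hash and back-link are consistent."""
    for i, block in enumerate(chain):
        if block.block_hash != block.compute_hash():
            return False  # contents were altered after the block was sealed
        expected_prev = chain[i - 1].block_hash if i > 0 else GENESIS_PREV
        if block.prev_hash != expected_prev:
            return False  # link to the previous block is broken
    return True


# Usage: record two hypothetical readings, then tamper with the first one.
chain: List[Block] = []
append_block(chain, {"vehicle": "AV-1", "speed_kmh": 42})
append_block(chain, {"vehicle": "AV-1", "speed_kmh": 45})
print(verify_chain(chain))       # True
chain[0].data["speed_kmh"] = 90  # edit an old record in place
print(verify_chain(chain))       # False
```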

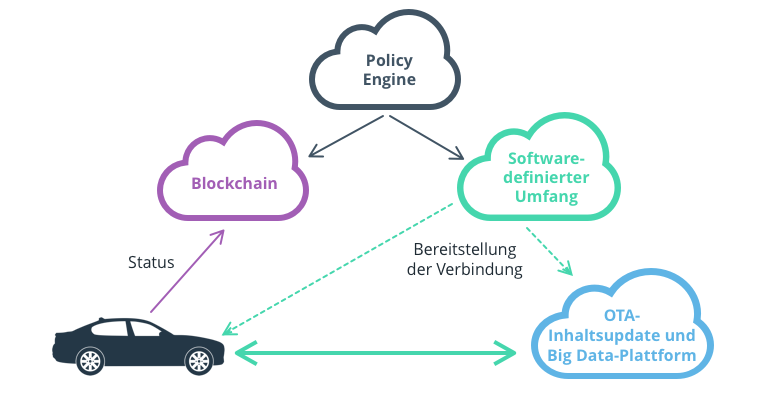

Security in automotive: Software-defined perimeter secures vehicle-to-cloud communication

A software-defined perimeter (SDP) is another way to make self-driving cars safer. This framework was developed by the Cloud Security Alliance to create secure networks for enterprise use, and Gartner has included SDP in its Top Technologies for Security list. Unlike a traditional network perimeter, an SDP verifies the identity of a device before granting it access to the application infrastructure.

In other words, the framework restricts network access and connections between elements. An SDP also hides the application infrastructure: protected by the architecture, infrastructure elements have no visible IP or DNS information. Authorized devices can access the data only through a temporary cryptographic connection. This means that an SDP can protect autonomous vehicles from network-based attacks.
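The "verify first, connect second" flow can be sketched in a few lines. This is a minimal illustration, loosely inspired by the single-packet-authorization idea used in SDP designs, not the Cloud Security Alliance specification. It assumes a hypothetical pre-shared key per vehicle, an HMAC-SHA256 signature over the device ID, timestamp, and a one-time nonce, and short-lived session tokens; every name (`DEVICE_KEYS`, `authorize`, `gateway_allows`, and so on) is hypothetical. Unauthenticated requests get no answer at all, which mirrors how an SDP keeps infrastructure invisible.

```python
import hashlib
import hmac
import secrets
import time
from typing import Dict, Optional, Set, Tuple

# Hypothetical pre-provisioned device keys (real systems would keep these in secure hardware).
DEVICE_KEYS = {"vehicle-042": b"example-shared-secret"}
MAX_SKEW_S = 30      # reject requests older than this (limits replay)
SESSION_TTL_S = 300  # lifetime of a granted session, i.e. the "temporary" connection

_seen_nonces: Set[str] = set()
_sessions: Dict[str, Tuple[str, float]] = {}  # token -> (device_id, expiry time)


def sign_request(device_id: str, key: bytes, now: float) -> dict:
    """Device side: build an authorization request signed with the device key."""
    nonce = secrets.token_hex(8)
    msg = f"{device_id}|{int(now)}|{nonce}".encode()
    return {"device_id": device_id, "ts": int(now), "nonce": nonce,
            "mac": hmac.new(key, msg, hashlib.sha256).hexdigest()}


def authorize(req: dict, now: float) -> Optional[str]:
    """Controller side: verify identity first; return a session token or None.

    Returning None instead of an error mimics an SDP staying silent toward
    unauthenticated clients, so the service is not discoverable.
    """
    key = DEVICE_KEYS.get(req.get("device_id", ""))
    if key is None or abs(now - req["ts"]) > MAX_SKEW_S or req["nonce"] in _seen_nonces:
        return None
    msg = f"{req['device_id']}|{req['ts']}|{req['nonce']}".encode()
    expected = hmac.new(key, msg, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, req["mac"]):  # constant-time comparison
        return None
    _seen_nonces.add(req["nonce"])
    token = secrets.token_urlsafe(16)
    _sessions[token] = (req["device_id"], now + SESSION_TTL_S)
    return token


def gateway_allows(token: str, now: float) -> bool:
    """Gateway side: forward traffic only for live, previously granted sessions."""
    entry = _sessions.get(token)
    return entry is not None and now < entry[1]


# Usage
now = time.time()
req = sign_request("vehicle-042", DEVICE_KEYS["vehicle-042"], now)
token = authorize(req, now)
print(token is not None)  # True
print(authorize(req, now))  # None: the same nonce cannot be replayed
print(gateway_allows(token, now + 10), gateway_allows(token, now + 600))  # True False
```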

Blockchain technology and SDP complement each other to increase the cybersecurity of self-driving cars. The blockchain helps verify data and protect its integrity, while a software-defined perimeter protects the vehicle’s communications with cloud applications.

Social behavior algorithms define how safe autonomous vehicles are

Although autonomous vehicles aren’t easily distracted the way human drivers are, they lack human intuition and social behavior. To make autonomous driving safer for everyone, vehicles have to think like human drivers. This means that AI-operated cars should not only obey the rules but also be able to break them when needed. For example, if someone’s life is at stake, a car may have to cross a double line.

The MIT Media Lab has created the Moral Machine website to illustrate the difficult ethical problems that autonomous vehicles can face. Humans make such moral judgments intuitively, but programming that kind of complex behavior into self-driving cars is a real challenge.

Remarkably, moral and ethical social behavior can be translated into complex computer algorithms. Social scientists and programmers at Stanford are working together to make driverless technology behave more like human drivers. By doing so, social behavior algorithms will increase the safety of self-driving cars.

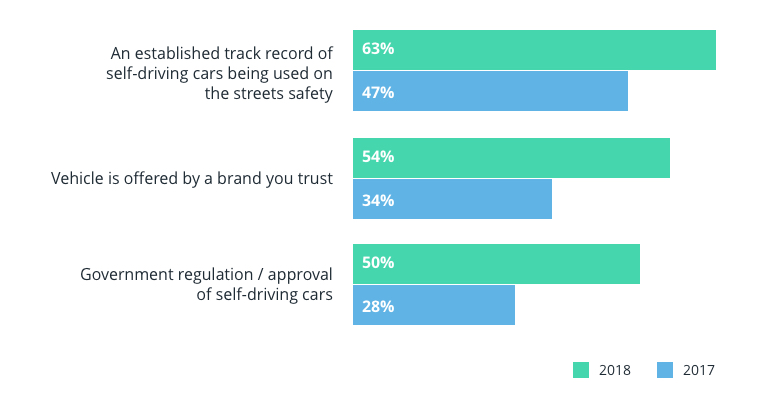

An established safety record can make people feel safer in autonomous vehicles

Research by Deloitte points out that consumers want an established safety record for autonomous vehicles. Event data recorders (EDRs) can serve as black boxes for autonomous cars, just like those used in planes to record crash information. By revealing the real causes of an incident, these black boxes may show drivers how safe autonomous vehicles are.

EDRs in autonomous cars would record data about the moment of a crash and several seconds before it, including the speed at the time of impact, when the brakes were activated, whether the driver took the wheel, and even whether the driver and passengers were wearing seatbelts.
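Recording "the moment of a crash and several seconds before it" is naturally done with a fixed-size ring buffer that continuously overwrites the oldest data and is frozen when a crash is detected. The sketch below assumes a hypothetical 10 Hz sample rate, a 5-second window, and illustrative field names taken from the list above; actual EDR data elements and recording intervals are set by regulations and manufacturers, not by this example.

```python
from collections import deque
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Sample:
    t: float                  # seconds since the start of the trip
    speed_kmh: float
    brake_applied: bool
    driver_hands_on: bool     # whether the driver had taken the wheel
    seatbelts_fastened: bool


class EventDataRecorder:
    """Hypothetical EDR keeping only the most recent `window_s` seconds of samples."""

    def __init__(self, sample_rate_hz: int = 10, window_s: int = 5):
        # A deque with maxlen silently discards the oldest sample once full.
        self._buffer = deque(maxlen=sample_rate_hz * window_s)
        self.frozen: List[Sample] = []

    def record(self, sample: Sample) -> None:
        self._buffer.append(sample)

    def on_crash(self) -> List[Sample]:
        """Copy the pre-crash window so later samples cannot overwrite it."""
        self.frozen = list(self._buffer)
        return self.frozen


# Usage: 10 seconds of driving at 10 Hz, braking in the last half second.
edr = EventDataRecorder(sample_rate_hz=10, window_s=5)
for i in range(100):
    t = i / 10
    edr.record(Sample(t, speed_kmh=50.0 - max(0.0, t - 9.5) * 20,
                      brake_applied=t >= 9.5, driver_hands_on=False,
                      seatbelts_fastened=True))
snapshot = edr.on_crash()
print(len(snapshot), snapshot[0].t, snapshot[-1].t)  # 50 5.0 9.9
```

Copying the buffer on a crash, rather than handing out the live deque, is what keeps the pre-crash evidence intact if the car keeps logging afterward.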

Image: Factors that make customers feel better about riding in a self-driving car

But while an independent EDR might help determine why a crash occurred, installing one in a self-driving car may create new problems for the car manufacturer, primarily around data.

Who owns the data collected by an EDR and who can use it? The driver? The owner of the vehicle? The car manufacturer? The insurance company? And isn’t this type of recording a violation of a driver’s privacy? Currently, it looks like autonomous cars will carry EDRs only once car manufacturers and standards bodies settle on data collection and sharing standards.

Even so, as the autonomous race takes off, not every company is ready to disclose everything that happened during an incident. Take Tesla, for example. After the fatal Model X crash, the company did not explain why Autopilot made a mistake. Instead, Tesla blamed the driver for not taking control of the vehicle.

The company responded similarly in 2016, when a Model S in Autopilot mode collided with a truck. Tesla blamed the car’s braking rather than Autopilot, even though the National Transportation Safety Board found that the automated system was partially responsible for the crash.

Driverless technology is still far from perfect. Autonomous cars tested in cities often make mistakes and get into accidents. Fortunately, there are ways to make these vehicles safer on public roads.

Virtual simulation is a solution for safe testing of autonomous cars. Blockchain technology and software-defined perimeters increase a vehicle’s cybersecurity. Social behavior algorithms make autonomous driving safer for everyone by turning cars into more human-like drivers. Finally, established safety records can lay the groundwork for collaboration between industry players. Together, these measures can make self-driving cars safer on the road and beyond.

If you have any questions related to autonomous driving safety, contact us. Together, we’ll find a way to make your solution safer.