Ironically, businesses aren’t getting better at making decisions even though they have more access to data and analytics tools than ever. Sixty-four percent of chief financial officers surveyed by Deloitte name inadequate technologies/systems as one of the three greatest challenges in turning data into insights.

You might ask: Aren’t data analytics budgets growing year over year? They are. But so is the volume, variety, and complexity of data.

Businesses are paying a lot for data infrastructure and business intelligence (BI) tools, but they often see a small return on investment (ROI) and a big list of complaints from end-users about the tools’ complexity, lengthy setup cycles, etc.

What if your team could write text-based questions instead of complex SQL queries? The combination of large language models (LLMs) and data analytics promises to commoditize access to analytics.

How LLMs and data analytics enable data-driven workflows

Traditional data analytics tools work with structured and numerical data. Large language models (LLMs), in turn, can interpret human language and extract sentiments, speech patterns, and specific topics from unstructured textual data.

By fusing LLMs with data analytics, businesses can use more data points, plus create a conversational interface to explore them.

How LLMs enhance data analytics

- Identify the most relevant information your teams need to do their job.

- Query extensive knowledge bases and analytical databases with natural language.

- Generate summarized answers, trend predictions, and next-best action recommendations.

- Explain complex concepts, trends, and outliers in data.

It’s quite possible that you have already asked ChatGPT to perform some analytics tasks in your industry and had mixed results.

General-purpose LLMs like Bidirectional Encoder Representations from Transformers (BERT) and Generative Pre-trained Transformer (GPT) weren’t explicitly trained to run route optimization scenarios for different types of electric vehicles (EVs), for example. But thanks to various model fine-tuning techniques, you can teach general-purpose models to:

- Better understand the jargon in your industry (e.g., financial or legal terms) to analyze complex documents

- Fetch knowledge from a specific dataset (e.g., anonymized CRM data) to provide relevant, data-backed answers

- Generate answers in different formats — text, video, images — based on the user prompt

Effectively, fine-tuned LLMs help accomplish two things for data analytics: Improve access to different data assets through a conversational interface and help deliver more comprehensive, contextually relevant insights.

That’s exactly how many data-driven companies already use LLMs. Colgate-Palmolive, for example, uses generative AI to synthesize consumer and shopper insights and better capture consumer sentiment. Morgan Stanley has launched an AI workforce assistant, which can handle a range of research inquiries (What’s the projected interest rate increase in April 2024?) and general admin queries (How can I open a new IRA account?).

According to a 2023 global study by Amazon Web Services, 80% of chief data officers expect that generative AI will positively transform their organization’s business environment — and for some good reasons.

Benefits of combining LLMs and data analytics

By augmenting existing business analytics processes with LLMs, teams across functions can get answers to questions that previously required at least one business analyst or data scientist. Advantages of using LLMs for data analytics include:

- Reduced learning curve. With LLMs, there’s no need for SQL queries or half-day training for junior staff. Teams can run an analytics command with a simple text prompt.

- Personalized reports. LLMs can slice and dice available data to create short summaries, provide segmented insights, or recommend next steps.

- Innovative insights. Combined with machine learning methods for processing structured data, LLMs can discover hidden patterns, nascent trends, and subtle correlations in data.

- Cost efficiency. Operationalize more datasets without spending extra on expensive software licenses or specialists to set up yet another analytics dashboard.

- Scalability. Embedding extra functions into pre-trained LLMs allows them to support a broader range of use cases and is simpler than implementing another business intelligence (BI) tool.

- Content generation. Custom reports based on text-based user queries or ad-hoc data visualizations are also great tasks for LLMs in data analytics.

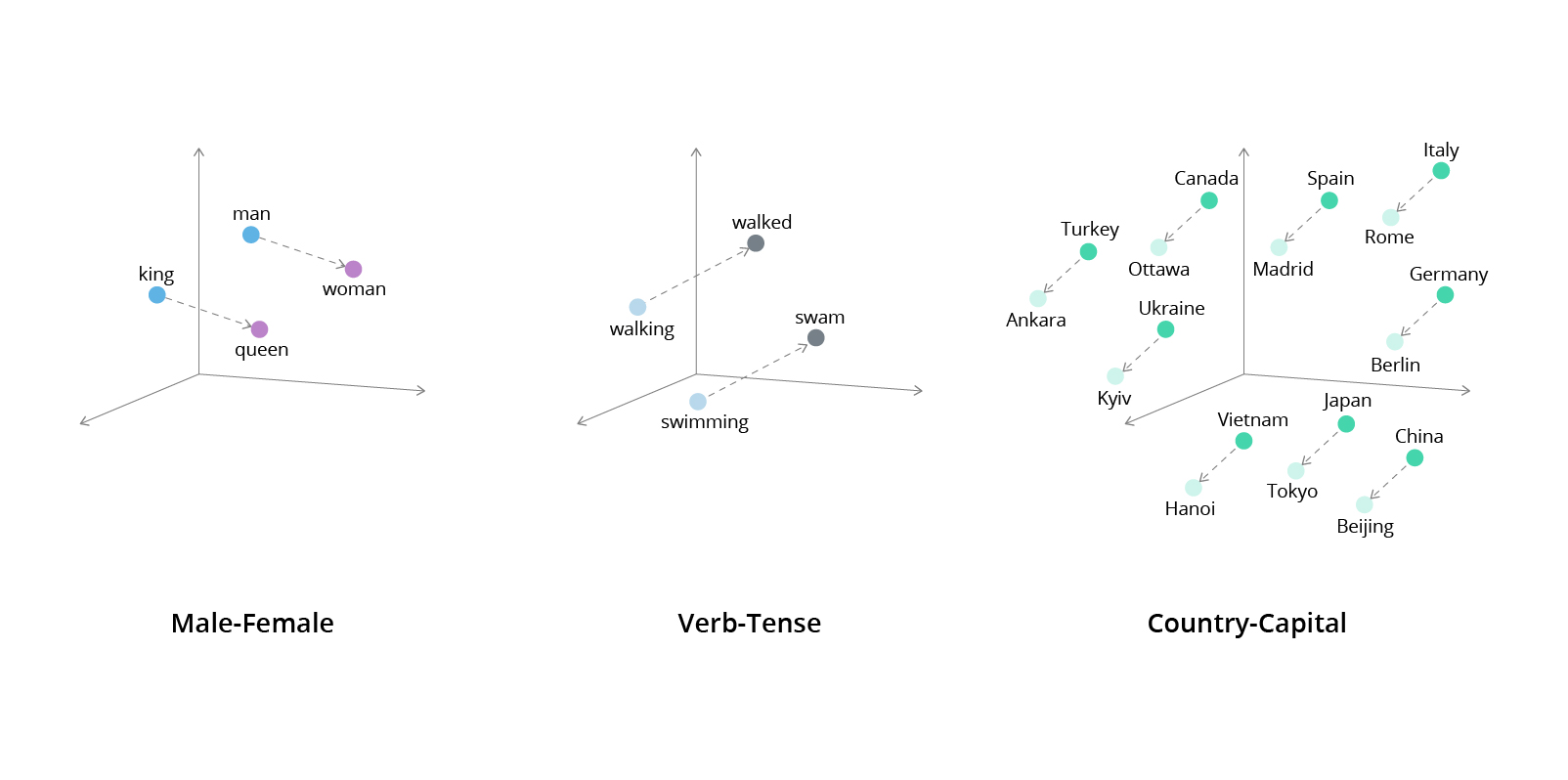

Unlike regular data analytics and machine learning models, LLMs can perform semantic search, taking into account the meaning and intention behind a user’s query to provide a more relevant answer. For that, the models employ embeddings: vector-based representations of relationships between words, phrases, and data points. Thanks to embeddings, LLMs can locate data points that closely relate to the user’s intent and return nuanced and context-aware answers. Effectively, LLMs can transform vast amounts of textual data into precise insights, plus combine this information with numerical data to provide more comprehensive information to users.

6 viable combinations of LLMs with data analytics

So, what exactly can LLMs do for data analysis? Let’s take a look at several labor-intensive knowledge tasks companies can streamline:

- Customer sentiment analysis. LLMs can detect nuances in textual data and interpret the semantics of written content at a massive scale.

- Sales analytics. Instead of relying on dashboards and SQL queries, business analysts can interact with CRM, ERP, and other data sources via a conversational interface.

- Market intelligence. By combining textual and numerical data, business analysts can identify nascent trends, patterns, and potential growth opportunities.

- Sustainability reporting. LLMs can facilitate data extraction and management and can be configured for automatic document generation and/or validation.

- Due diligence. LLMs help better identify risks, inconsistencies, and critical insights that leaders need to know to make better deals.

- Fraud investigation. With LLMs, analysts get high-precision anomaly detection capabilities and conversational assistance in investigation efforts.

Customer sentiment analysis

LLMs like GPT and BERT can perform semantic searches. This can make a huge difference in sentiment analysis, revealing whether a customer thinks your product is “terrible” versus “terribly awesome!”

Open-source large language models are already rather good at scanning for context, but they can further improve information extraction by using vector databases.

Vector databases store unstructured data (such as texts) as mathematical vectors that indicate word meanings and the semantic relationships between them (known as word embeddings). This helps the model better distinguish the intent behind a user’s query and generate more accurate results. For example, a vector database can help to ensure that your customer is talking about Java the programming language and not Java the Indonesian island.

Source: Semantic Scholar

A pair of words that possess similar meanings or occur frequently in similar contexts will share a vector relationship.
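To make the Java example concrete, here is a minimal sketch of how context can steer a query toward the right meaning. It assumes hand-made three-dimensional vectors and a crude "average the word vectors" text embedding; real embedding models produce hundreds of dimensions, and every vector and function name below is invented for illustration.

```python
import math

# Toy 3-dimensional embeddings, invented for illustration only.
# The axes loosely stand for (software, geography, beverage).
EMBEDDINGS = {
    "java":     [0.6, 0.6, 0.3],   # ambiguous on its own
    "compiler": [0.9, 0.0, 0.0],
    "jakarta":  [0.1, 0.9, 0.0],
}

# Reference vectors for the two senses we want to tell apart (hypothetical).
SENSES = {
    "programming language": [1.0, 0.0, 0.0],
    "Indonesian island":    [0.0, 1.0, 0.0],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b)

def embed_text(words):
    """Average the word vectors of a text: a crude stand-in for a sentence embedding."""
    vectors = [EMBEDDINGS[w] for w in words]
    return [sum(dim) / len(vectors) for dim in zip(*vectors)]

def closest_sense(words, senses):
    """Return the sense whose reference vector is most similar to the text."""
    query = embed_text(words)
    return max(senses, key=lambda name: cosine_similarity(query, senses[name]))

print(closest_sense(["java", "compiler"], SENSES))
print(closest_sense(["java", "jakarta"], SENSES))
```

The same word "java" lands near a different sense depending on the words around it, which is the behavior a vector database lookup relies on when it retrieves context for an LLM.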

To improve LLM performance, you can create custom embeddings for your domain or use a pre-trained open-source model like Word2Vec, GTE-Base, RoBERTa, or MPNet V2. By fusing LLMs and business analytics tools, you can get 360-degree insights based on structured data points (NPS scores) and unstructured data points (text complaints). For example, Intellias recently helped a client deploy a brand performance tracker that combines NLP, generative AI, and traditional data analytics methods.

The business solution can collect data from external sources — social media, blog posts, reviews, etc. — and transform it into accurate reputational indices. As a result, the brand marketing team no longer needs to manually review media coverage to assess the impact of recent promotional campaigns. Instead, they can get condensed and prioritized data from a GPT model, along with accurate assessments of recent brand mentions from all around the web.

What’s more, you can program an LLM to collect sentiment from media like images, audio, or video. Sonnet, for example, is fine-tuning the GPT-4 model to pick up important insights from voice and video conversations related to sales deals, customer demos, user interviews, or even morning standups. The ultimate goal is to create a conversational assistant that can deliver insights on what resonates with customers and what they complain about.

Sales analytics

Almost unanimously, business leaders agree that data drives better decisions, and yet a recent Salesforce survey has found that only 33% use data to decide on pricing in line with economic conditions. And no, it’s not that teams lack business intelligence tools in the first place. What they lack is the ability to interpret available data.

The two main barriers to unlocking more value from data are a lack of understanding of the data, because it is too complex or not accessible enough, and a lack of ability to generate insights from it.

Unlike humans, LLMs are great at transforming mathematical formulas and endless rows of numbers into easily digestible text summaries. Instead of staring at yet another bar chart, comparing numbers in Excel, or writing complex SQL queries to analyze data from an ERP system, your sales team can just ask an LLM chatbot with RAG to generate a sales report for product X or calculate the average customer lifetime value for all users acquired since January 2020.
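As a rough sketch of what happens behind such a chatbot, the question about average customer lifetime value could be translated into a query like the one below. No LLM is called here: the SQL is hand-written as a stand-in for what a model might generate, and the `customers` table, its columns, and the sample rows are all hypothetical.

```python
import sqlite3

# In-memory database with a hypothetical customers table and made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers ("
    "id INTEGER PRIMARY KEY, acquired_on TEXT, lifetime_value REAL)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [
        (1, "2019-11-03", 1200.0),  # acquired before the cutoff, excluded
        (2, "2020-02-14", 800.0),
        (3, "2021-07-30", 400.0),
    ],
)

# Stand-in for the SQL an LLM might produce from the question
# "What is the average customer lifetime value for users acquired since January 2020?"
GENERATED_SQL = """
SELECT AVG(lifetime_value)
FROM customers
WHERE acquired_on >= ?
"""

def average_lifetime_value(connection, since):
    """Run the generated query with the cutoff date passed as a parameter."""
    row = connection.execute(GENERATED_SQL, (since,)).fetchone()
    return row[0]

avg_clv = average_lifetime_value(conn, "2020-01-01")
print(f"Average customer lifetime value since 2020-01-01: {avg_clv:.2f}")
```

Passing the date as a bound parameter rather than pasting it into the query string is a sensible default whenever model-generated SQL is executed against real data.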

And that’s exactly what many companies already do. Findly has created a GenAI assistant for interpreting Google Analytics 4 data. Instead of fiddling with filters, users can write a quick message in Slack and Findly will look at anything from the latest traffic stats to the most important user acquisition metrics, broken down by language. Einstein GPT from Salesforce lets users interact with CRM data using natural language.

Effectively, a fine-tuned LLM for data analytics tasks can act as a tireless on-demand business analyst for your sales team, supplying them with insights on new trends in customer spending, average order values, sales volumes for different product groups, active discount policies, and anything else that can be gleaned from the available data.

Market intelligence

Apart from delivering hyper-granular insights, LLMs also help companies make better sense of wider market trends. For example, they can help with tasks such as identifying trends, detecting regulatory changes, analyzing product portfolios, and benchmarking competitors. For such broad analysis, AI teams often rely on graph databases.

Graph databases record data as a network of relationships between interconnected data points. Formally, a graph is an ordered pair with two parts: a set of vertices (nodes) and a set of edges (links) that connect them. A graph can contain any number of nodes and edges to represent complex semantic relationships in data.

Graph databases help organize information in a refined format, allowing for more relevant and granular search results, similar to vector databases. Using word embeddings in vector databases may result in precise answers, but the model may lose global context.

In a graph database, nodes represent word concepts, and edges represent the relationships between them.

For example, when asked about the top business competitors, an LLM could give solid data on each competitor individually but fail to benchmark competitors against each other. Because graph structures capture relationships in data, they help models generate more nuanced answers that factor in different variables. Graph databases also support faster data querying, as it is easier for computing systems to traverse relationship graphs and operationalize different vector combinations.
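The competitor example can be sketched with a tiny in-memory graph. This is an illustration under stated assumptions rather than how any particular graph database works: the company names, relationship labels, and helper functions are all made up, and a plain dictionary stands in for the database.

```python
from collections import defaultdict

# Edges as (source, relationship, target) triples. All names are invented.
EDGES = [
    ("AcmeCo", "competes_with", "BetaCorp"),
    ("AcmeCo", "competes_with", "GammaInc"),
    ("AcmeCo", "sells_in", "Europe"),
    ("BetaCorp", "sells_in", "Europe"),
    ("BetaCorp", "sells_in", "Asia"),
    ("GammaInc", "sells_in", "Asia"),
]

def build_graph(edges):
    """Index edges as graph[node][relationship] -> set of neighboring nodes."""
    graph = defaultdict(lambda: defaultdict(set))
    for source, relationship, target in edges:
        graph[source][relationship].add(target)
    return graph

def benchmark_competitors(graph, company):
    """For each competitor, list the markets it shares with `company`."""
    own_markets = graph[company]["sells_in"]
    return {
        rival: sorted(own_markets & graph[rival]["sells_in"])
        for rival in sorted(graph[company]["competes_with"])
    }

graph = build_graph(EDGES)
overlap = benchmark_competitors(graph, "AcmeCo")
print(overlap)
```

Because the relationships are stored explicitly, comparing competitors becomes a short traversal over edges instead of a series of separate per-company lookups.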

Knowledge graphs also help algorithms discover new relationships in data, which enables advanced predictive analytics scenarios. Take it from the London Stock Exchange Group (LSEG), a global provider of financial market intelligence. The company boasts a host of advanced products for quantitative analytics, financial modeling, yield analysis, and mutual fund performance data analytics.

One of their key products, the StarMine Mergers and Acquisition Target Model (M&A Target), predicts the likelihood of public company acquisitions using a combination of machine learning techniques and natural language processing (NLP). LSEG’s data science team fine-tuned a BERT model using historical M&A records from the Reuters News Archive (RNA) service to enable deeper market analysis.

To ensure high accuracy, the team used techniques like named entity replacement to remove sensitive information from the training data (such as company names and locations). Doing so prevents the model from associating an offhand mention of a company with previous M&A events, resulting in more accurate predictions. The team also applied other data pre-processing steps to properly map the relationships between data points and ensure high model accuracy in predicting M&A targets.
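Named entity replacement can be illustrated with a simple dictionary-based sketch. This is not LSEG's pipeline: the entity lists, placeholder tokens, and sample headline are hypothetical, and a production system would more likely use a trained named entity recognition model than a fixed lookup.

```python
import re

# Hypothetical entity lists; a real pipeline would likely rely on an NER model.
ENTITIES = {
    "COMPANY": ["Acme Holdings", "Beta Corp"],
    "LOCATION": ["London", "New York"],
}

def replace_entities(text, entities):
    """Swap each known entity for a generic placeholder such as [COMPANY]."""
    for label, names in entities.items():
        # Replace longer names first so a short name never clips a longer one.
        for name in sorted(names, key=len, reverse=True):
            pattern = r"\b" + re.escape(name) + r"\b"
            text = re.sub(pattern, f"[{label}]", text)
    return text

headline = "Acme Holdings in talks to acquire Beta Corp, sources in London say"
masked = replace_entities(headline, ENTITIES)
print(masked)
```

After masking, the model sees the structure of the sentence ("a company is in talks to acquire another company") without being able to memorize which specific companies were involved in past deals.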

Effectively, StarMine’s model can process both structured information (financial statements, corporate actions, deal information) and unstructured insights (news coverage). Then, it can synthesize the obtained data to make accurate financial predictions. LSEG believes this is a more advantageous approach than solely using fundamental data. Unstructured insights provide richer context and expanded coverage of data. Users can query the LLM with text on M&A targets for over 38,000 public companies globally and ask it to surface specific data for analysis.

Sustainability reporting

Business leaders have a laudable commitment to environmental, social, and governance (ESG) targets. Almost every investor report these days bears a mention of planned carbon footprint reduction or a greater degree of inclusion in the workplace. Regulations in the EU, the UK, and a number of other countries and regions have made ESG disclosure mandatory for many publicly traded companies.

However, the regulatory landscape is highly fragmented, with multiple reporting frameworks. Unsurprisingly, 75% of ESG teams name data collection as the key challenge for implementing their sustainability strategy. The absence of standardization in ESG reporting forces teams to spend more time on compliance instead of advancing with planned initiatives.

A lot of ESG insights are buried in unstructured data sources, and that’s yet another great use case for combining LLMs and business analytics tools. Briink has created a product to facilitate data extraction with LLMs. Fine-tuned on a proprietary dataset, Briink’s generative AI assistant can auto-fill ESG questionnaire data using information obtained from company documents. It also provides source references for human workers to cross-check automatically reported information. Additionally, the model can analyze submitted documents (such as current vendor agreements) for gaps in compliance. For example, it can ensure that the current corporate policy aligns with the EU Pay Transparency Directive.

A group of Chinese researchers has proposed an effective framework for extracting ESG data from corporate reports using retrieval-augmented generation (RAG) techniques. The team tested the fine-tuned version of the GPT-4 model on a dataset of ESG reports from 166 companies listed on the Hong Kong Stock Exchange, achieving an accuracy rate of 76.9% in data extraction and 83.7% in disclosure analysis, which was above that of previous reference models.

Due diligence

Similar to ESG disclosures, due diligence requires multi-dimensional analysis of textual, visual, and numerical data. Traditionally, M&A teams need to go through many (sometimes physical) folders of documents, containing all sorts of financial reports and statements. But given current economic uncertainty, relying on historical financial insights isn’t enough. Increased scrutiny from regulators, governments, and investors has led to a 30% increase in the average time for closing an M&A deal over the past decade.

According to Deloitte, in 2024, 79% of corporate leaders and 86% of private equity leaders expect an increase in deal volume over the next 12 months, which means even more work for supporting teams. These teams are also increasingly focused on accurate deal valuation, and that’s a great task for LLMs.

DeepSearch Labs specializes in custom LLMs for business insights. The company has developed proprietary search engine technology, powered by knowledge graphs, that can be fine-tuned for different use cases including asset valuations. Through a conversational interface, users can obtain data on major market trends, sub-trends, and outliers in the analyzed dataset. One current user claims that DeepSearch Labs delivered the same level of due diligence insights on a recent valuation target as hired consultants, but for a fraction of the cost.

Thomson Reuters recently launched an AI-powered Document Intelligence platform, which can come in handy at later deal stages. The company says its legal staff spent over 15,000 hours providing feedback and fine-tuning the model to ensure its top performance. The platform enables legal teams to automatically locate important contractual information (indemnities, special clauses, etc.) and analyze their meaning, intent, and context to ensure that nothing important gets overlooked.

Fraud investigation

In the UK alone, over £1.2 billion was lost to fraud in 2023, with 80% of fraud cases beginning online. Although process digitization eliminated a good degree of simple financial fraud cases, cyber criminals have gotten better at covering their tracks and engineering more elaborate attacks.

Anti-fraud and cybersecurity teams, in turn, feel pressured by the mounting volume of work while also being severely understaffed. Gartner expects that by 2025, half of significant cyber incidents will happen because of talent shortages.

Although AI cannot fully replace human analysts, it can make teams more productive by removing menial tasks. Lucinity has created an AI copilot called Luci for fraud case investigation. Using transactional data from connected sources, Luci helps analysts investigate suspected fraud by providing a quick summary of a detected incident. Instead of opening multiple tabs, an analyst can get all the data they need to run a check right in Luci. Apart from analyzing transactional data and security signals, Luci can also scan corporate reports and multimedia files to discover extra insights for the team (for example, recent trading activity with a sanctioned entity).

SymphonyAI has a similar GenAI assistant that can be used for a broader range of tasks, from KYC and CDD to AML investigations. Powered by Microsoft Azure OpenAI Service, the assistant can collect, collate, and summarize different data signals associated with fraudulent behavior. Users can slice and dice available data using a natural language interface to confirm their suspicions, request a summary of key risk factors for any monitored business, and automatically generate suspicious activity reports (SARs). SymphonyAI expects that the integration of LLMs into fraud investigation workflows will result in 60% less time and 70% less effort on the part of human investigators.

Looking ahead at the potential of LLMs and data analytics

While 2023 was the year of large foundation models, 2024 will be the year of smaller domain-specific LLMs, fine-tuned to address specific business problems. These fine-tuned LLMs might perform highly contextual sentiment analysis or provide data-driven instructions for fraud analysis. When asked in September 2023, 62% of machine learning teams said they plan to have an LLM-based application in production within a year.

While general-purpose LLMs have proven to be a security and privacy nuisance, custom fine-tuned models can be trained in a more isolated environment. They can operationalize a large volume of proprietary, non-public, and regulated data using different privacy-preserving techniques. In other words, they are paving the way for obtaining more business insights with LLMs.

At the same time, fine-tuned domain-specific models can become a separate product line for your business. In the same way that banks and FinTech companies started to rent out core banking systems as a service, we are already seeing larger companies (Amazon, Microsoft, IBM) distributing API-based access to open-source foundation models. More players with domain-specific offerings are already in the market, and more are likely to emerge in the next several years. The potential of combining LLMs and data analytics is still largely untapped, and the field is ripe for innovation.

Your AI journey starts with Intellias

As a leading consultancy firm with AI at its core, Intellias invites businesses to embark on their AI transformation journey. Our end-to-end solutions, tailored to meet the unique needs of each partner, guarantee not just technological advancement but also a strategic edge in the marketplace. Contact us today to unlock the full potential of AI and data analytics for your business.