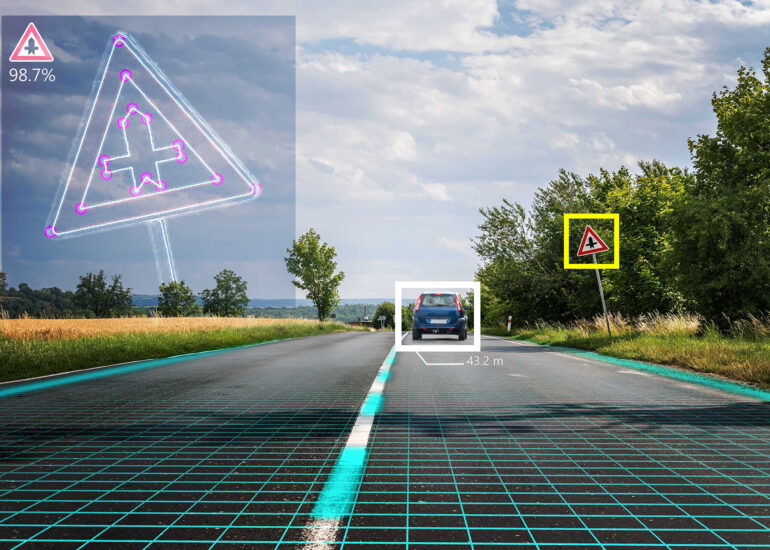

Autonomous vehicles can get into many different situations on the road. If drivers are going to entrust their lives to self-driving cars, they need to be sure that these cars will be ready for the craziest of situations. What’s more, a car should react to these situations better than a human driver would. A car can’t be limited to handling a few basic scenarios. A car has to learn and adapt to the ever-changing behavior of other vehicles around it. Machine learning algorithms and deep learning in self-driving cars make autonomous vehicles capable of making decisions in real time. This increases safety and trust in autonomous cars.

Read an overview of the most popular machine learning algorithms for autonomous driving to find out what they do and why they matter for a fully driverless future.

How are machine learning algorithms used for autonomous driving?

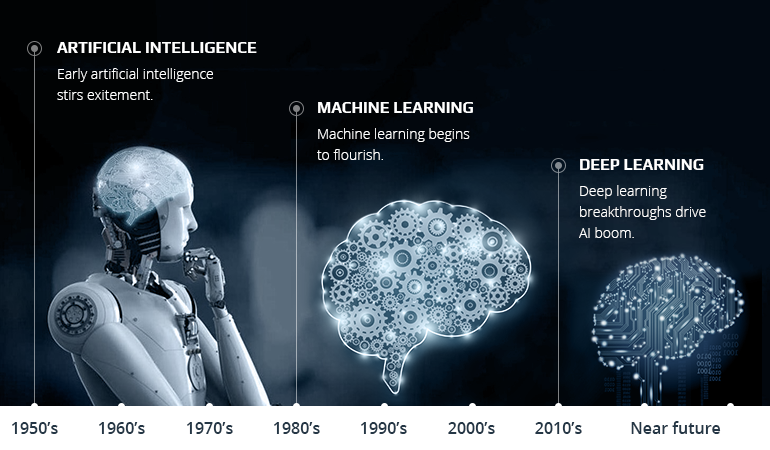

Machine learning is a subset of artificial intelligence that focuses on improving how a machine performs a task through experience. Here’s the most important part: learning means that the machine generalizes beyond its training data. Equipped with machine learning algorithms, a computer can apply induction, inferring general rules from specific examples, and form knowledge structures. In other words, where hand-written rules fail, software powered by machine learning can succeed.

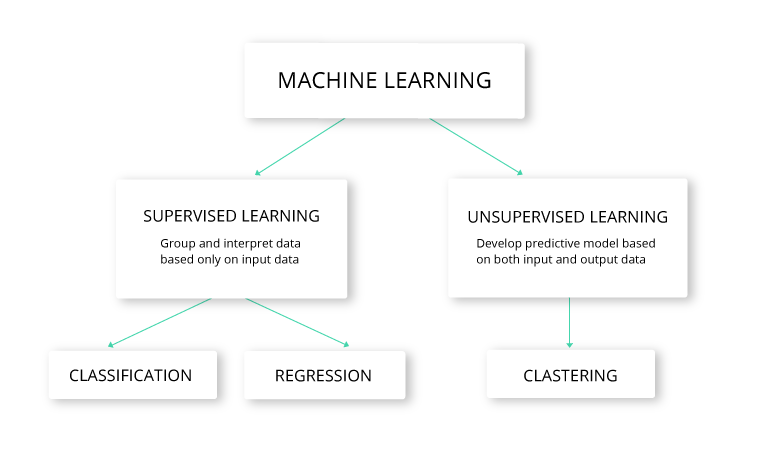

Machine learning in autonomous driving can be supervised or unsupervised. The main difference between the two lies in the amount of human input required for learning. In supervised learning, humans label the training data with the correct outputs. The computer makes predictions from the input data, then compares those predictions to the correct outputs in order to improve future predictions. In unsupervised learning, the data isn’t labeled, so the computer learns to recognize its inherent structure from the input data alone.

Supervised versus unsupervised learning in autonomous driving
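The difference between the two modes can be sketched on toy data. This is a minimal illustration, not a production pipeline: the two-dimensional features (object width and height), the class names, and the choice of a nearest-centroid classifier and 2-means clustering are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical 2-D features (width, height in meters) for two object types.
cars = rng.normal(loc=[4.0, 1.5], scale=0.2, size=(50, 2))
pedestrians = rng.normal(loc=[0.5, 1.7], scale=0.1, size=(50, 2))
X = np.vstack([cars, pedestrians])
y = np.array([0] * 50 + [1] * 50)  # labels: 0 = car, 1 = pedestrian


def nearest(centers, points):
    """Index of the closest center for every row of points."""
    dists = np.linalg.norm(points[:, None, :] - centers[None, :, :], axis=2)
    return dists.argmin(axis=1)


# Supervised: the labels y tell the model which points belong together.
class_centers = np.array([X[y == c].mean(axis=0) for c in (0, 1)])
supervised_pred = nearest(class_centers, X)
supervised_acc = (supervised_pred == y).mean()  # accuracy on the training data

# Unsupervised: 2-means clustering never sees y; it only groups nearby points.
first = X[0]
farthest = X[np.linalg.norm(X - first, axis=1).argmax()]
centers = np.array([first, farthest])  # simple deterministic initialization
for _ in range(10):
    clusters = nearest(centers, X)
    centers = np.array([X[clusters == j].mean(axis=0) for j in (0, 1)])

# Cluster ids are arbitrary, so compare with the labels up to a swap.
agreement = max((clusters == y).mean(), (clusters != y).mean())
print(f"supervised accuracy: {supervised_acc:.2f}, cluster/label agreement: {agreement:.2f}")
```

On data this cleanly separated, both approaches recover the same grouping. The practical difference is that only the supervised model knows which group is called "car" and which is called "pedestrian".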

Today, machine learning is among the hottest technologies for autonomous driving. In particular, deep learning in self-driving cars is becoming increasingly popular. Deep learning is a class of machine learning in which multilayer neural networks learn useful features directly from real-world data instead of relying on hand-crafted ones. Thanks to deep learning, a self-driving car can turn the large volumes of raw data it collects into actionable information.

History of machine learning in self-driving car development

The adoption of machine learning in self-driving cars is driving progress in both the automotive and technology industries.

Applications of machine learning in self-driving cars include:

- localization in space and mapping

- sensor fusion and scene comprehension

- navigation and movement planning

- evaluation of a driver’s state and recognition of a driver’s behavior patterns

What are the common machine learning algorithms used in autonomous driving?

Let’s take a deeper look at how machine learning and computer vision are applied in self-driving cars.

The machine learning algorithms used in self-driving cars

Scale-invariant feature transform (SIFT)

Imagine a car partially hidden behind a tree. Could an autonomous vehicle detect it? It could with the help of SIFT. Scale-invariant feature transform allows image matching and object recognition for partially visible objects. The algorithm extracts salient points (i.e., keypoints) of an object from a database of reference images. Those points are features of the object that don’t change with scaling or rotation and that remain detectable despite clutter and noise.

In a self-driving car, the algorithm compares the features of every new image with the SIFT features already extracted from the database. It looks for correspondences between them to identify objects. For instance, when an autonomous car sees a triangular road sign, it can take the sign’s three corners as keypoints. If a triangular road sign were damaged and bent back, a self-driving vehicle using SIFT could still recognize it based on these inherent features.

Feature extraction with SIFT
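The comparison step described above can be sketched as nearest-neighbor matching with a ratio test, the matching strategy proposed in the original SIFT paper. This sketch covers only matching, not keypoint detection or descriptor extraction. The descriptors are synthetic random vectors standing in for real 128-dimensional SIFT descriptors, and the function name `match_descriptors` and the `ratio` value of 0.75 are assumptions for illustration.

```python
import numpy as np


def match_descriptors(query, database, ratio=0.75):
    """Return (query_index, database_index) pairs that pass the ratio test."""
    # Euclidean distance from every query descriptor to every database descriptor.
    dists = np.linalg.norm(query[:, None, :] - database[None, :, :], axis=2)
    order = np.argsort(dists, axis=1)
    best, second = order[:, 0], order[:, 1]
    rows = np.arange(len(query))
    # Keep a match only if the best candidate is clearly closer than the runner-up.
    keep = dists[rows, best] < ratio * dists[rows, second]
    return [(int(q), int(best[q])) for q in rows[keep]]


rng = np.random.default_rng(0)
database = rng.random((20, 128))  # synthetic stand-ins for stored 128-D descriptors
seen = database[[3, 7, 11]] + rng.normal(scale=0.01, size=(3, 128))  # noisy re-observations
clutter = rng.random((2, 128))  # descriptors with no true counterpart in the database
matches = match_descriptors(np.vstack([seen, clutter]), database)
print(matches)
```

The ratio test is what makes matching tolerant of clutter: a descriptor that is about equally far from all stored descriptors is rejected rather than forced onto its nearest neighbor.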

AdaBoost

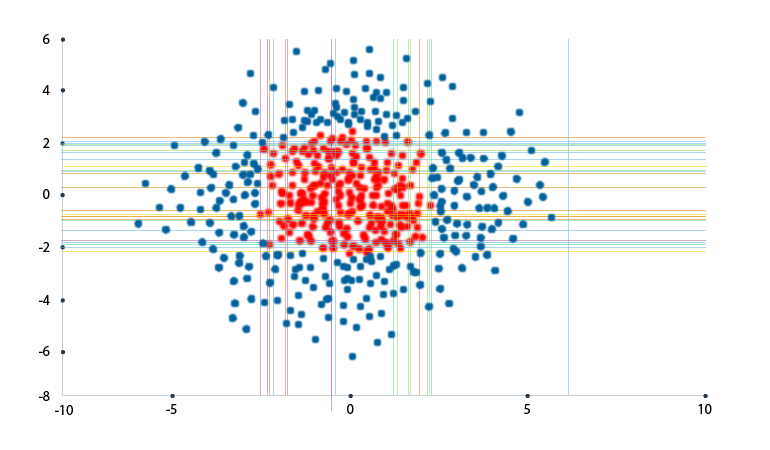

Any engineer developing a self-driving car project using machine learning is likely to come across AdaBoost. AdaBoost, short for adaptive boosting, is an ensemble algorithm that boosts the performance of other learners. In essence, it takes the outputs of other classification and regression algorithms and weights each one according to how often its predictions are correct.

AdaBoost combines and adapts multiple algorithms so they work together and complement each other. Individual algorithms may perform poorly on their own, but their combined output can be far more accurate.

Let’s imagine there are several learners: A, B, C, and D. A and B look at the same criteria, but A performs better. Meanwhile, C’s performance is worse than either A’s or B’s, but it evaluates completely different criteria. This means that C together with A can provide a better output than A together with B. D’s predictions may fail most of the time, but its output can still be useful to the entire system: in a two-class problem, a learner that is reliably wrong becomes informative once its vote is inverted. In this way, AdaBoost combines many weak classifiers to obtain one strong classifier.

Data classification using AdaBoost
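The way weak learners add up to a strong one can be sketched with a small from-scratch version of discrete AdaBoost. This is a minimal sketch under stated assumptions: the weak learners are one-feature threshold rules (decision stumps), the ten-point dataset is a toy example, and the helper names `fit_stump`, `stump_predict`, `adaboost`, and `predict` are hypothetical.

```python
import numpy as np


def stump_predict(X, feature, threshold, sign):
    """Weak learner: predict `sign` where the feature is <= threshold, else -sign."""
    return np.where(X[:, feature] <= threshold, sign, -sign)


def fit_stump(X, y, w):
    """Find the stump with the lowest weighted error under sample weights w."""
    best = None
    for feature in range(X.shape[1]):
        for threshold in np.unique(X[:, feature]):
            for sign in (1, -1):
                pred = stump_predict(X, feature, threshold, sign)
                err = w[pred != y].sum()
                if best is None or err < best[0]:
                    best = (err, feature, threshold, sign)
    return best


def adaboost(X, y, rounds):
    """Train `rounds` stumps; labels y must be -1 or +1."""
    w = np.full(len(y), 1.0 / len(y))  # start with uniform sample weights
    ensemble = []
    for _ in range(rounds):
        err, feature, threshold, sign = fit_stump(X, y, w)
        err = np.clip(err, 1e-10, 1 - 1e-10)  # avoid division by zero
        alpha = 0.5 * np.log((1 - err) / err)  # vote weight; negative if err > 0.5
        pred = stump_predict(X, feature, threshold, sign)
        w = w * np.exp(-alpha * y * pred)  # raise weights of misclassified samples
        w /= w.sum()
        ensemble.append((alpha, feature, threshold, sign))
    return ensemble


def predict(ensemble, X):
    """Weighted vote of all stumps in the ensemble."""
    score = sum(a * stump_predict(X, f, t, s) for a, f, t, s in ensemble)
    return np.where(score >= 0, 1, -1)


# Toy 1-D problem that no single threshold can separate.
X = np.arange(10, dtype=float).reshape(-1, 1)
y = np.array([1, 1, 1, -1, -1, -1, 1, 1, 1, -1])
ensemble = adaboost(X, y, rounds=3)
single_acc = (predict(ensemble[:1], X) == y).mean()
combined_acc = (predict(ensemble, X) == y).mean()
print(f"first stump alone: {single_acc:.1f}, three stumps combined: {combined_acc:.1f}")
```

Each round focuses the next stump on the samples the previous ones got wrong, which is the "adaptive" part of the name. The vote weight `alpha` also shows why a learner that is wrong most of the time is still usable in a two-class problem: its weight becomes negative, so its vote counts in reverse.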

AdaBoost allows for more accurate decision-making and object detection in autonomous vehicles. It’s especially useful for face, pedestrian, and vehicle detection.

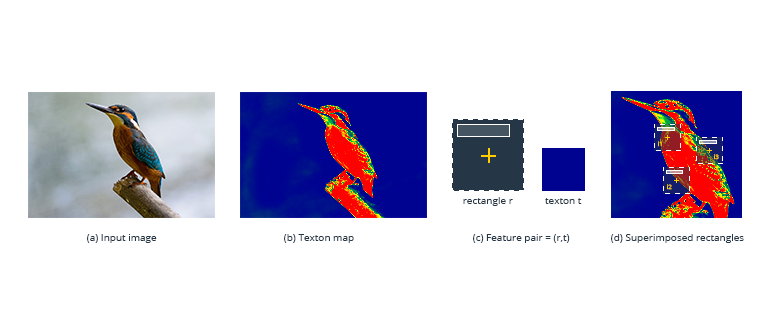

TextonBoost

Just like AdaBoost, TextonBoost combines weak learners to produce strong learners. It boosts image recognition based on the labelling of textons. Textons are clusters of visual data that have the same characteristics and respond to filters in the same way.

In a self-driving car project using machine learning, the TextonBoost algorithm brings together information from three sources: appearance, shape, and context. Combining them is what makes the approach effective, because individually these sources may not lead to accurate results. To put it simply, sometimes an object’s appearance alone isn’t enough to label it correctly.

TextonBoost combines several classifiers to produce the most accurate object recognition. It looks at the image as a whole and captures its characteristics in relation to each other. Take a look at the images below, for example.

First, the computer creates a texton map of the image. Then it pairs features with textons and learns from contextual information. In this case, it learns that “cow” pixels are usually surrounded by “grass” pixels.

Object recognition with TextonBoost
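The first step, building a texton map, can be sketched as follows: compute a few filter responses per pixel, cluster them, and replace each pixel with the id of its cluster. This is a heavily simplified sketch. The three-filter bank (intensity plus absolute horizontal and vertical gradients), the k-means settings, and the function names are assumptions for illustration, and the later boosting and context-learning stages are not covered.

```python
import numpy as np


def filter_responses(image):
    """Tiny illustrative filter bank: intensity and absolute x/y gradients."""
    image = image.astype(float)
    gy, gx = np.gradient(image)  # derivatives along rows (y) and columns (x)
    return np.stack([image, np.abs(gx), np.abs(gy)], axis=-1)


def texton_map(image, k=2, iters=10):
    """Cluster per-pixel filter responses with k-means; return cluster ids per pixel."""
    feats = filter_responses(image).reshape(-1, 3)
    # Deterministic farthest-point initialization of the cluster centers.
    centers = [feats[0]]
    for _ in range(1, k):
        d = np.min([np.linalg.norm(feats - c, axis=1) for c in centers], axis=0)
        centers.append(feats[d.argmax()])
    centers = np.array(centers)
    for _ in range(iters):
        d = np.linalg.norm(feats[:, None, :] - centers[None, :, :], axis=2)
        labels = d.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):  # leave a center unchanged if its cluster is empty
                centers[j] = feats[labels == j].mean(axis=0)
    return labels.reshape(image.shape)


# Toy image: dark left half, bright right half.
image = np.zeros((8, 8))
image[:, 4:] = 1.0
textons = texton_map(image, k=2)
print(textons)
```

In the full algorithm, features computed over this map (for example, how often a given texton appears in a region offset from the pixel being labeled) are what let the classifier pick up context such as "cow" pixels tending to sit next to "grass" pixels.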

By combining appearance, shape, and context, TextonBoost helps autonomous vehicles detect and identify objects more accurately than any one of these cues would allow on its own.

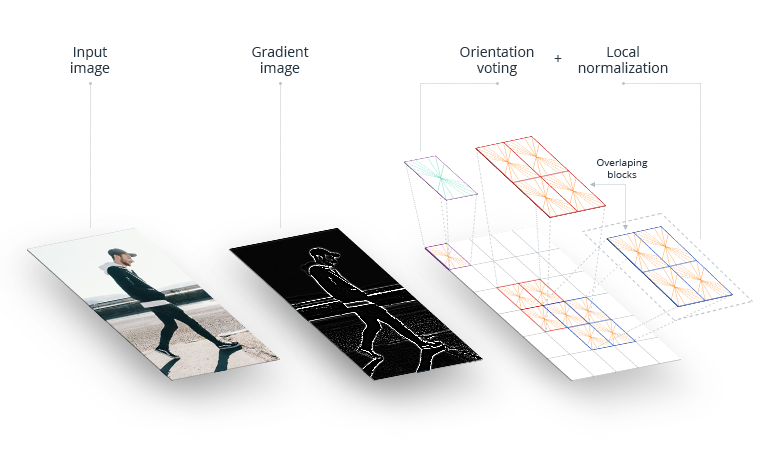

Histogram of oriented gradients (HOG)

Histogram of oriented gradients (HOG) is one of the most basic feature extraction techniques used in machine learning for autonomous driving and computer vision. It divides an image into small regions, called cells, and analyzes each one to see how strongly and in what direction the intensity of the image changes. HOG collects the computed gradients from each cell and counts how often each direction occurs. After that, these features are passed to a classifier, typically a support vector machine (SVM).

Basically, HOG describes images as distributions of gradient directions. It creates a compact encoding of an image that isn’t just a bunch of pixels but a summary of its edges and shapes. Moreover, it’s inexpensive in terms of system resources. Self-driving cars can benefit from HOG as it can be a powerful initial step in the image recognition sequence.

Steps of the HOG algorithm
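The per-cell histogram step can be sketched in a few lines of NumPy. This is a simplified sketch: it assumes 8×8-pixel cells, nine unsigned orientation bins over 0 to 180 degrees, and hard assignment of each pixel to one bin. Fuller implementations also interpolate between neighboring bins and normalize histograms over blocks of cells, which is omitted here. The function name `hog_cells` is hypothetical.

```python
import numpy as np


def hog_cells(image, cell=8, bins=9):
    """Return an array of shape (rows, cols, bins): one orientation histogram per cell."""
    image = image.astype(float)
    gy, gx = np.gradient(image)  # intensity change along rows (y) and columns (x)
    magnitude = np.hypot(gx, gy)
    angle = np.degrees(np.arctan2(gy, gx)) % 180.0  # unsigned orientation
    bin_index = np.minimum((angle / (180.0 / bins)).astype(int), bins - 1)

    rows, cols = image.shape[0] // cell, image.shape[1] // cell
    hist = np.zeros((rows, cols, bins))
    for r in range(rows):
        for c in range(cols):
            window = (slice(r * cell, (r + 1) * cell), slice(c * cell, (c + 1) * cell))
            # Each pixel votes for its orientation bin, weighted by gradient strength.
            hist[r, c] = np.bincount(
                bin_index[window].ravel(),
                weights=magnitude[window].ravel(),
                minlength=bins,
            )
    return hist


# Toy image with a single vertical edge: intensity changes only along x.
vertical_edge = np.zeros((16, 16))
vertical_edge[:, 8:] = 1.0
hist = hog_cells(vertical_edge)
print(hist.shape, hist[0, 0])
```

Flattening `hist` gives the feature vector that would then be handed to a classifier such as an SVM.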

What’s interesting is that HOG works particularly well for human detection. This task is hard because of the different appearances people have and the variety of poses they can take. Because HOG captures the overall shape of a figure rather than its exact pixels, it tolerates much of this variation, which makes it a practical choice for pedestrian detection in driverless vehicles.

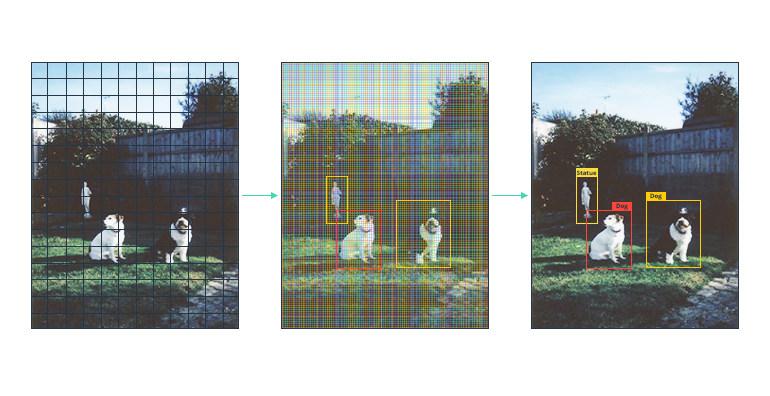

YOLO

YOLO (You Only Look Once) is a neural network-based machine learning algorithm for detecting and classifying objects such as cars, people, and trees. It’s an alternative to detectors built on HOG. YOLO analyzes the image as a whole and divides it into a grid of cells. Since each class of objects possesses a set of features, YOLO labels objects according to them.

The algorithm predicts bounding boxes and class probabilities for each grid cell. It considers each prediction in the context of the whole image and evaluates the network only once per image. By contrast, other detection algorithms apply detectors and classifiers to multiple positions and regions of an image. That’s why YOLO is fast enough for real-time use, and it’s typically more accurate than HOG-based detectors. The YOLO algorithm is a great tool for object detection in autonomous vehicles. It ensures quick processing and vehicle response to real-world situations.

YOLO algorithm used for object detection
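How per-cell predictions become bounding boxes can be sketched by decoding a network output by hand. This sketch assumes the scheme of the original YOLO paper: an S×S grid where each cell predicts B boxes as (x, y, w, h, confidence) plus C class probabilities, with x and y relative to the cell and w and h relative to the whole image. The exact ordering of values inside each cell vector, the `threshold` value, and the function name `decode` are assumptions for illustration, and the array here is filled in by hand rather than produced by a real network.

```python
import numpy as np


def decode(pred, S, B, C, img_w, img_h, threshold=0.5):
    """Turn an (S, S, B*5 + C) prediction array into a list of boxes in pixels.

    Each result is (x_min, y_min, x_max, y_max, score, class_id).
    """
    detections = []
    for row in range(S):
        for col in range(S):
            cell = pred[row, col]
            class_probs = cell[B * 5:]
            for b in range(B):
                x, y, w, h, conf = cell[b * 5:(b + 1) * 5]
                score = conf * class_probs.max()  # class-specific confidence
                if score < threshold:
                    continue
                # Box center: offset inside the cell, scaled to image pixels.
                cx = (col + x) / S * img_w
                cy = (row + y) / S * img_h
                bw, bh = w * img_w, h * img_h
                detections.append((
                    float(cx - bw / 2), float(cy - bh / 2),
                    float(cx + bw / 2), float(cy + bh / 2),
                    float(score), int(class_probs.argmax()),
                ))
    return detections


# Hand-made "network output": 2x2 grid, 1 box per cell, 2 classes.
S, B, C = 2, 1, 2
pred = np.zeros((S, S, B * 5 + C))
pred[1, 0] = [0.5, 0.5, 0.25, 0.5, 0.9, 0.1, 0.9]  # one confident box in the bottom-left cell
detections = decode(pred, S, B, C, img_w=100, img_h=100)
print(detections)
```

Because all cells are decoded from a single forward pass, the cost of detection does not grow with the number of candidate regions. Real pipelines usually follow this step with non-maximum suppression to merge overlapping boxes, which is left out here.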

Autonomous vehicles and machine learning will drive the future of transportation

Machine learning is a powerful technology for self-driving cars, and together they will define the future of the transportation industry. In autonomous vehicles, machine learning algorithms are most commonly used for perception and decision-making. But there are many more algorithms and possibilities to discover. For instance, you can also apply machine learning to autonomous navigation and to recognizing a driver’s state.

Today, there are already plenty of ways to apply machine learning in self-driving cars, and there will be even more in the future. So when vehicles become fully autonomous, you’ll know exactly what drove the change.

Ask our automotive experts at Intellias about other machine learning algorithms used in autonomous vehicles.