Agentic AI is spreading across functions, budgets, and industries, with or without a formal strategy in place. For many companies, enterprise adoption of AI agents in 2026 has become a necessity, yet most organizations struggle to move from pilot to production. The gap between “exploring agents” and a working enterprise AI adoption framework is wide and hard to ignore.

What are the enterprise adoption trends for agentic AI in 2026?

According to Deloitte’s 2026 State of AI in the Enterprise report, which surveyed more than 3,200 business and IT leaders globally, two-thirds of organizations have already seen productivity and efficiency gains from AI, and 34% are using it to deeply transform their businesses rather than to optimize existing processes. Nearly three in four companies plan to deploy agentic AI within two years, and many are already running it across multiple functions. For most, the starting point is engineering, where AI accelerates prototyping, code generation, and testing. From there, enterprise adoption of AI agents has expanded into Sales & Marketing, Operations, ITSM, and HR.

How companies structure this varies, but a common pattern is emerging: a central orchestration layer that users interact with through a single interface, routing work to specialized agents behind the scenes. For workflows with multiple decision points, those agents connect into agentic workflows built on enterprise AI platforms like Microsoft Azure AI Foundry and Copilot Studio. Built-in suite agents from platforms like Microsoft, HubSpot, and Atlassian add another layer, driving workflow improvement at the team level without requiring custom builds.
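As a rough illustration of that pattern, the sketch below shows a single entry point that routes each request to a specialized agent. Everything in it is hypothetical: the agent functions, the keyword-based routing, and the `Orchestrator` class are stand-ins, not the API of Azure AI Foundry, Copilot Studio, or any other platform. A real orchestration layer would typically use intent classification rather than keyword matching.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical specialist agents: plain functions standing in for real agents.
def hr_agent(request: str) -> str:
    return f"[HR agent] handling: {request}"

def itsm_agent(request: str) -> str:
    return f"[ITSM agent] handling: {request}"

def sales_agent(request: str) -> str:
    return f"[Sales agent] handling: {request}"

@dataclass
class Orchestrator:
    """Single interface for users; routes work to agents behind the scenes."""
    routes: Dict[str, Callable[[str], str]]  # keyword -> specialist agent

    def handle(self, request: str) -> str:
        text = request.lower()
        # Naive keyword routing (an assumption made to keep the sketch small).
        for keyword, agent in self.routes.items():
            if keyword in text:
                return agent(request)
        # Nothing matched: hand the request back to a person.
        return "[Orchestrator] no matching agent; escalating to a human"

orchestrator = Orchestrator(routes={
    "vacation": hr_agent,
    "password": itsm_agent,
    "quote": sales_agent,
})

print(orchestrator.handle("Reset my password"))
print(orchestrator.handle("Plan the office party"))
```

The fallback branch matters as much as the routes: a request that no agent can own goes to a human instead of being guessed at.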

What’s an agent’s job vs a human’s job

Every agentic AI strategy starts with a job to be done — an idea that comes from inside the team, from product research, from peers, or from customers. The starting question is whether this is a human job, involving responsibility and consequential decisions, or an agent job that exists to support those decisions.

The design philosophy is that agents handle tasks of lower cognitive value and operate under a clear responsibility threshold, while people make the decisions. Agents build the environment that lets people decide faster and with better information, freeing teams for high-value, differentiating work.

The impact tends to be measurable: faster delivery, better accuracy, more time for work that requires human judgment. But agents also surface problems organizations would rather not confront, such as poor data quality, broken integrations, or outdated documentation. In that sense, they push organizations to address the enterprise data layer and the technical debt that has been deferred.

Once that distinction is clear, a quick check follows:

- data quality

- availability

- architecture fit

- technical feasibility.

If the idea passes, it moves forward as a delivery candidate, the first step in any serious enterprise adoption of AI agents.

From there, move fast. Pick up candidates with internal teams from the relevant departments, build an MVP, and add users at the proof-of-concept stage. This AI agent piloting approach, combined with AI-enabled engineering services, makes it possible to fail on working solutions rather than on concepts, and to do so at very low cost.

The principle here is: test every idea, but scale only the investments that prove their value.

Measuring ROI and productivity of enterprise AI agents

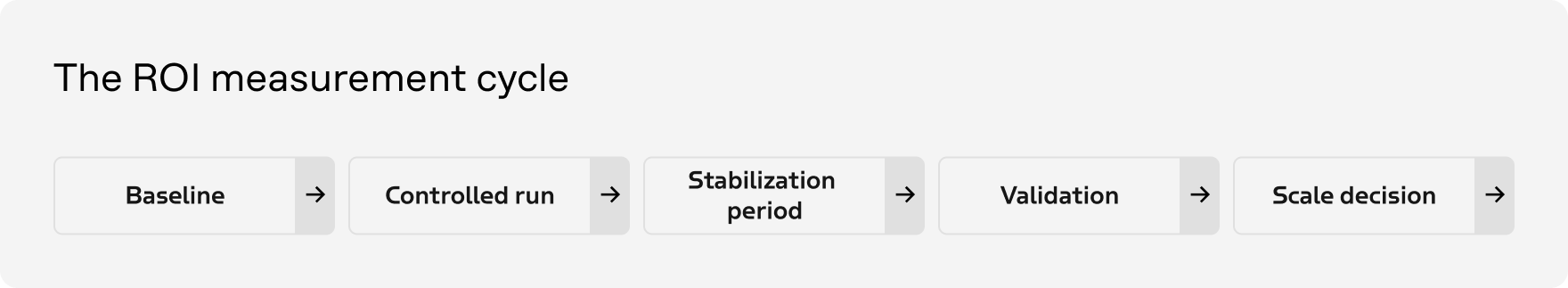

Before any agent goes live, establish a baseline: end-to-end cycle time, human effort required, rework and error rates, cost per completed case. Then run the agent in a controlled environment and track those same indicators across a comparable volume of work. Such an incremental approach separates disciplined agentic AI enterprise adoption from hype-driven rollouts.
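A minimal sketch of that comparison, assuming the four indicators are captured per case and averaged over a comparable volume of work. The `ProcessMetrics` class, the `relative_change` helper, and all numbers are hypothetical and only illustrate the arithmetic.

```python
from dataclasses import dataclass, fields

@dataclass
class ProcessMetrics:
    cycle_time_hours: float     # end-to-end cycle time per case
    human_effort_hours: float   # human effort required per case
    error_rate: float           # share of cases needing rework or correction
    cost_per_case: float        # cost per completed case

def relative_change(baseline: ProcessMetrics, pilot: ProcessMetrics) -> dict:
    """Return (pilot - baseline) / baseline for each indicator.

    Negative values mean the pilot is lower than the baseline.
    Assumes every baseline value is non-zero.
    """
    return {
        f.name: (getattr(pilot, f.name) - getattr(baseline, f.name))
        / getattr(baseline, f.name)
        for f in fields(baseline)
    }

# Made-up numbers for illustration only, not results from any real deployment.
baseline = ProcessMetrics(48.0, 6.0, 0.08, 40.0)
pilot = ProcessMetrics(36.0, 3.0, 0.06, 30.0)

changes = relative_change(baseline, pilot)
for name, change in changes.items():
    print(f"{name}: {change:+.0%}")
```

Keeping the baseline and the pilot in the same structure forces both to be measured on the same indicators, which is the point of establishing the baseline first.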

The temptation to declare success early is also a trap. The first weeks are for tuning and stabilization, so early numbers are a signal, never proof. Validation comes after the solution has operated under normal conditions, the novelty effect has faded, and the process has settled. At that point, you can ask whether the gains hold, whether quality is actually maintained, and, most importantly, whether the organization is really relying on the agent or compensating with extra manual work on the side.

The mark of agentic scaling done right is consistent improvement over multiple months, with no degradation in outcomes. Anything that only looks good inside a short pilot window is a demo, not a solution.
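One way to make that test explicit is sketched below, under stated assumptions: both indicators are "lower is better", three months is the minimum window, and the function names and figures are hypothetical. An organization would set its own thresholds.

```python
from typing import List

def gains_hold(baseline: float, monthly: List[float], min_months: int = 3) -> bool:
    """Lower-is-better indicator: True only if there are at least
    min_months of data and every month beats the baseline."""
    return len(monthly) >= min_months and all(value < baseline for value in monthly)

def no_degradation(baseline: float, monthly: List[float]) -> bool:
    """Lower-is-better quality indicator (e.g. error rate):
    True if no month is worse than the baseline."""
    return all(value <= baseline for value in monthly)

# Made-up numbers for illustration only.
cycle_baseline = 48.0
cycle_by_month = [36.0, 35.0, 37.0, 34.0]   # hours per case
error_baseline = 0.08
error_by_month = [0.07, 0.08, 0.06, 0.07]   # share of cases with errors

validated = (
    gains_hold(cycle_baseline, cycle_by_month)
    and no_degradation(error_baseline, error_by_month)
)
print(validated)
```

A two-month series fails `gains_hold` no matter how good the numbers look, which mirrors the distinction between a pilot window and sustained operation.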

The three obstacles a CIO can hit

Mindset before tooling

The hardest shift is organizational context. For years, CIOs have operated under constant operational pressure, shrinking budgets, and demand that always outpaces capacity.

Agentic AI changes that equation. Delivery cycles that once took weeks can now take days, which means more ideas can be tested and more prototypes can compete. But most teams aren’t ready for that shift. Instead of engaging AI specialists, engineers, and citizen developers as broadly as possible, they fall back into fitting work into this month’s budgeted person-days. The battle of concepts has to give way to the battle of prototypes, and that requires structural shifts in how teams think about their own capacity.

The legacy pit

Once the mindset shift is underway, the next obstacle is infrastructure debt. Agents don’t fix data silos or integration problems; they amplify them. Without a reliable enterprise data layer and stable system connections, agent sophistication counts for little. An IT strategy that seemed sufficient before agentic AI became central is no longer good enough; it has to be delivered in full, this calendar year.

Governance at scale

Once agents spread across teams, visibility gaps follow: shadow deployments, unclear ownership, misconfigured access, privilege escalation through agent handoffs, and data leakage through ungoverned agentic workflows.

Agentic AI risk management and security can’t be retrofitted after the fact. Establishing accountability structures before they’re urgently needed is far cheaper than rebuilding trust after something goes wrong. This is especially true of multi-agent architectures, where complexity and risk multiply across every handoff.
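As one illustration of an accountability structure, the sketch below records a named owner and a set of permission scopes for each agent, and limits a handoff to the scopes both sides already hold, so a handoff can never grant more access than the caller had. The `AgentRecord` class, the scope strings, and the agent names are all hypothetical; this shows a least-privilege rule in general terms, not a specific product’s security model.

```python
from dataclasses import dataclass
from typing import FrozenSet

@dataclass(frozen=True)
class AgentRecord:
    name: str
    owner: str                 # accountable person or team
    scopes: FrozenSet[str]     # permissions granted to this agent

def handoff_scopes(caller_scopes: FrozenSet[str], callee: AgentRecord) -> FrozenSet[str]:
    """Effective scopes after a handoff: the intersection of what the
    caller holds and what the callee is granted, never more."""
    return caller_scopes & callee.scopes

# Hypothetical registry entries.
intake = AgentRecord("intake", "service-desk", frozenset({"tickets:read"}))
resolver = AgentRecord(
    "resolver",
    "it-operations",
    frozenset({"tickets:read", "tickets:write", "hr:read"}),
)

effective = handoff_scopes(intake.scopes, resolver)
print(sorted(effective))
```

Without the intersection, the intake agent could reach HR data simply by delegating to a more privileged agent, which is the handoff escalation described above.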

How the role of a CIO is changing

The enterprise adoption trends for agentic AI point to one consistent pattern: the CIO role is being redefined by the very tools it oversees. The black box model — gatekeeping technology decisions — is gone.

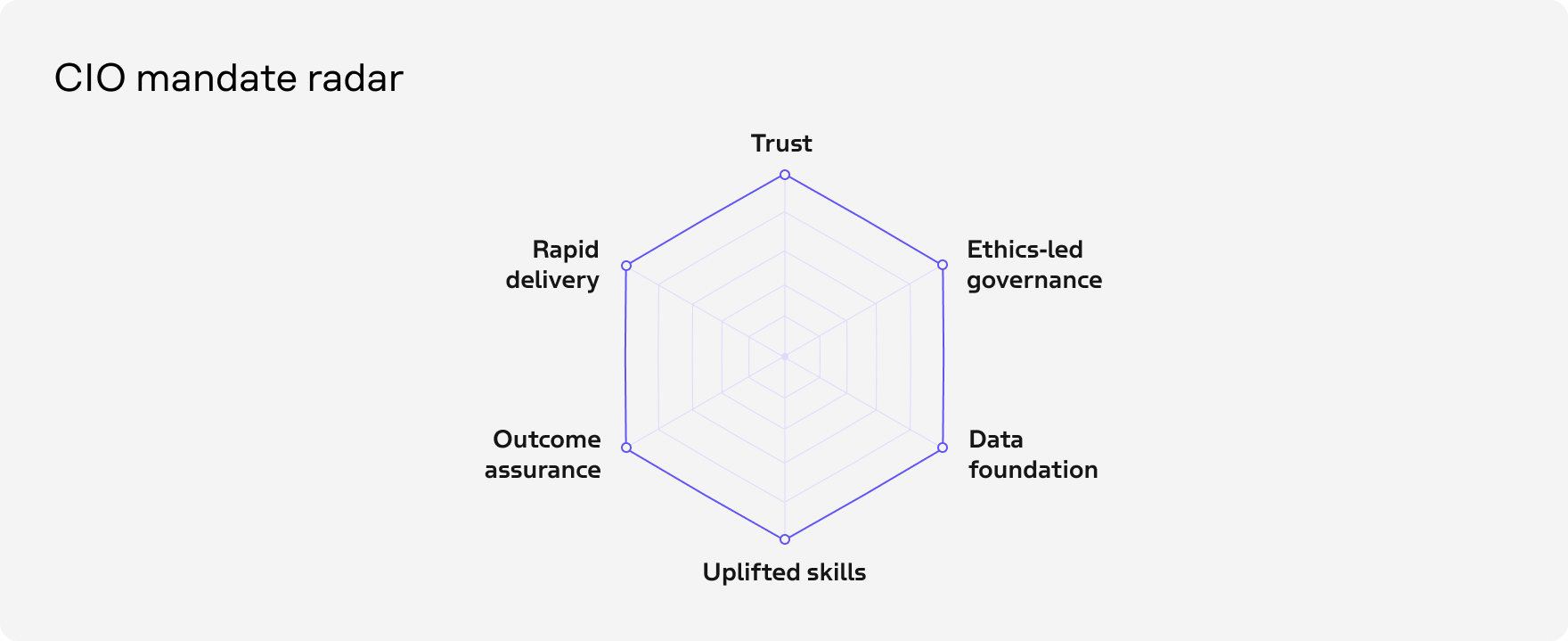

Maintaining trust across the organization becomes the core function. That means:

- building AI awareness and workforce engagement at every level

- defining governance driven by ethics rather than cost

- creating an environment where people can build with agentic AI safely

- closing technical debt decisively

- delivering consistently.

Ensuring that business decisions made with AI’s help lead to genuinely positive outcomes is a different kind of responsibility. So perhaps the I in CIO no longer stands for Information, but for Intelligence.

What to know before you start

Invisible work doesn’t get trusted

The first wave of autonomous enterprise workflows often executes complex tasks in the background and delivers clean, actionable results, but users push back anyway. The reason is that if the process isn’t visible, people can’t place their confidence in it.

The shift from human-executed to fully autonomous solutions can happen too fast, leaving people without a clear sense of where they stand. Building frontends, dashboards, and clear interaction points can help. Let users launch every agent manually, make outputs correctable, and define each step the agent takes.

AI is probably already in your organization

When you look closely at how people are working, you’ll find agentic AI use already embedded in team habits. People adopt tools without waiting for a strategy, a backlog, or a dedicated unit, so the task of leadership isn’t to introduce AI from above; it’s to find what’s already there and tend to it. This organic agentification is a signal of workforce engagement, not a governance problem.

Foundations decide outcomes

Cloud, data, integrations, and clear policies mark the line between a transformation that sticks and one that stalls at the pilot stage. Configurability and adoption models matter less than the quality of what sits underneath them. An IT strategy is only as good as its execution, and that execution has to hold regardless of budget pressure. This reality of the agentic AI tech stack is rarely emphasized.

Design the human-agent interface before you deploy

Whether to use a single orchestrator that catches intent and activates agents, a semiautonomous personal agent, or a set of distinct agent personas — these are agentic AI strategy choices that belong in the planning phase. The interface between humans and agents shapes whether the agent gets used at all. The maturity curve of any autonomous AI agent rollout runs directly through this decision.

The principles hold across industries, whether banking, retail, insurance, or airlines. Agents extend human judgment; they do not replace it. Keeping that distinction clear throughout the autonomous decision-making process is what separates successful deployments from expensive lessons.

Looking to deploy AI agents across your organization? Let Intellias help you build the foundation, governance, and workflows to make it stick.